James Huang

How much information is lost after a series of translations? What does a machine interpret after a series of translations? How does the interpreted result differ from our expectations and cognition?

http://www.jhjameshuang.com/lost-in-translation/

Description

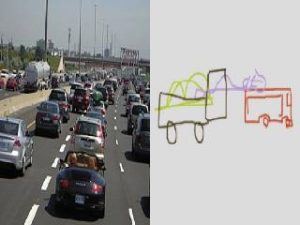

As artificial intelligence develops, more and more people worry that machines will replace humans. Although machine learning has produced some promising work, it still has to catch up with humans on complicated tasks, especially those that have no correct answer or that need to be interpreted. Moreover, we do not want a machine learning model to reach 100% accuracy on the data it was trained on, because such a model tends to handle new data poorly. Machine learning can also be treated as a translation process. As the number of translation steps increases, so does the bias. As the process goes on, the imperfection leads to information loss, and the loss leads to misunderstanding. In the end, the result deviates from our expectations. Therefore, I designed an interactive experience with a recursive process in which human and machine interpret each other’s results. In each round, the human comes up with a sentence to describe an image generated by the machine, and the machine performs multiple machine learning translations: from the human’s description to a sketch, and then from the sketch to an image.
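The recursive process described above can be sketched roughly as follows. This is only an illustration, not the project's actual implementation: `text_to_sketch`, `sketch_to_image`, and `run_rounds` are hypothetical names, and the two "models" are toy stand-ins that work on strings, chosen so that the information loss at each step is easy to see.

```python
from typing import Callable, List


def text_to_sketch(description: str) -> str:
    """Hypothetical stand-in for a text-to-sketch model.

    It keeps only the first three words, which makes the step lossy.
    """
    return " ".join(description.split()[:3])


def sketch_to_image(sketch: str) -> str:
    """Hypothetical stand-in for a sketch-to-image model."""
    return f"image<{sketch}>"


def run_rounds(describe: Callable[[str], str], first_image: str, rounds: int) -> List[str]:
    """Alternate human description and machine translation.

    Returns the starting image followed by the image produced in each round.
    """
    images = [first_image]
    for _ in range(rounds):
        description = describe(images[-1])       # human interprets the machine's image
        sketch = text_to_sketch(description)     # machine: description -> sketch
        images.append(sketch_to_image(sketch))   # machine: sketch -> image
    return images


if __name__ == "__main__":
    # A scripted "human" who always answers with the same sentence pattern.
    def scripted_human(image: str) -> str:
        return "a picture showing " + image

    for step, image in enumerate(run_rounds(scripted_human, "image<seed>", 2)):
        print(step, image)
```

In this toy version the original content of the first image is already gone after one round, because the lossy description-to-sketch step drops it. Real models lose information in less obvious ways, but the loop has the same shape.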

Classes

Thesis