Abstract

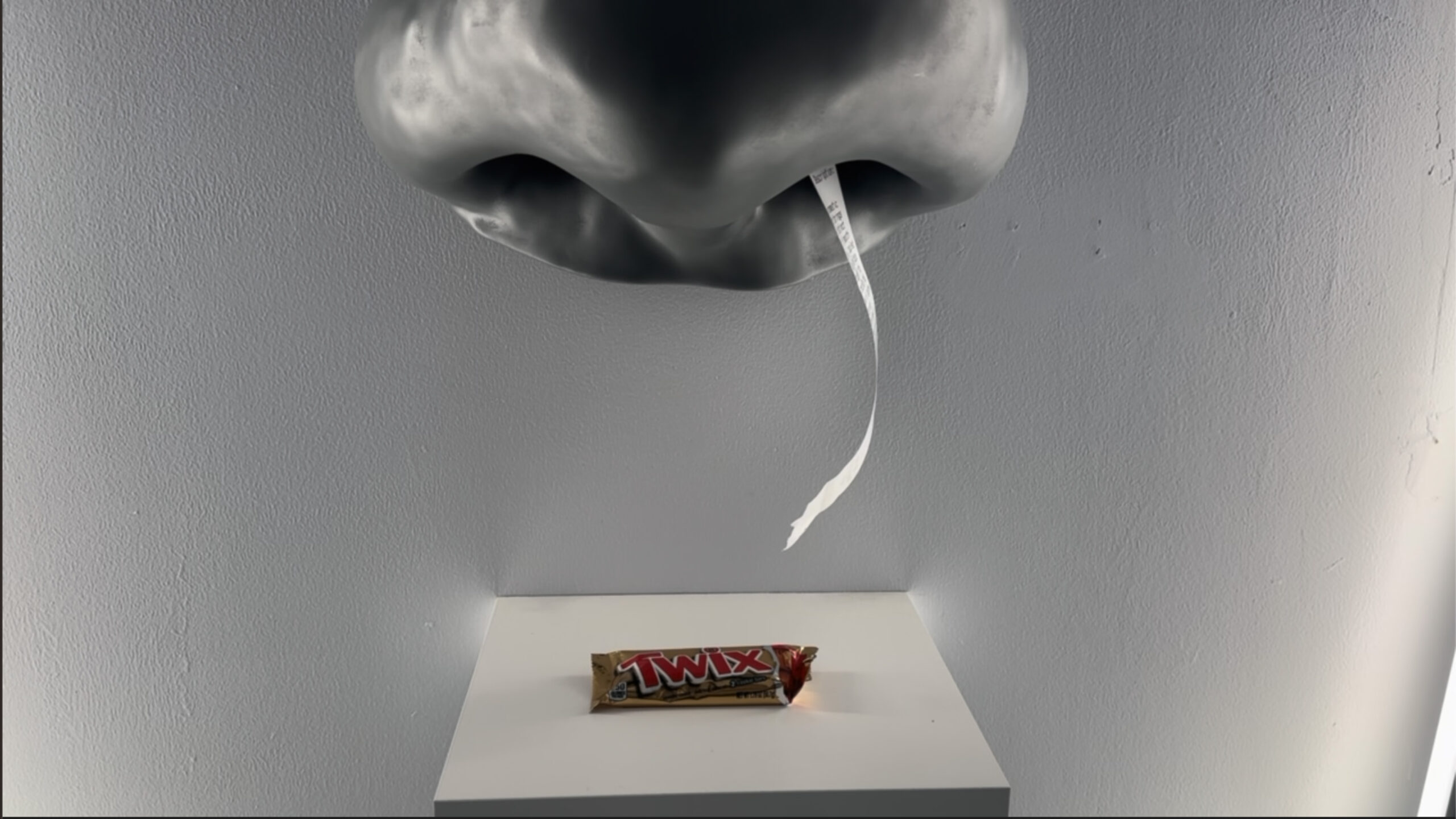

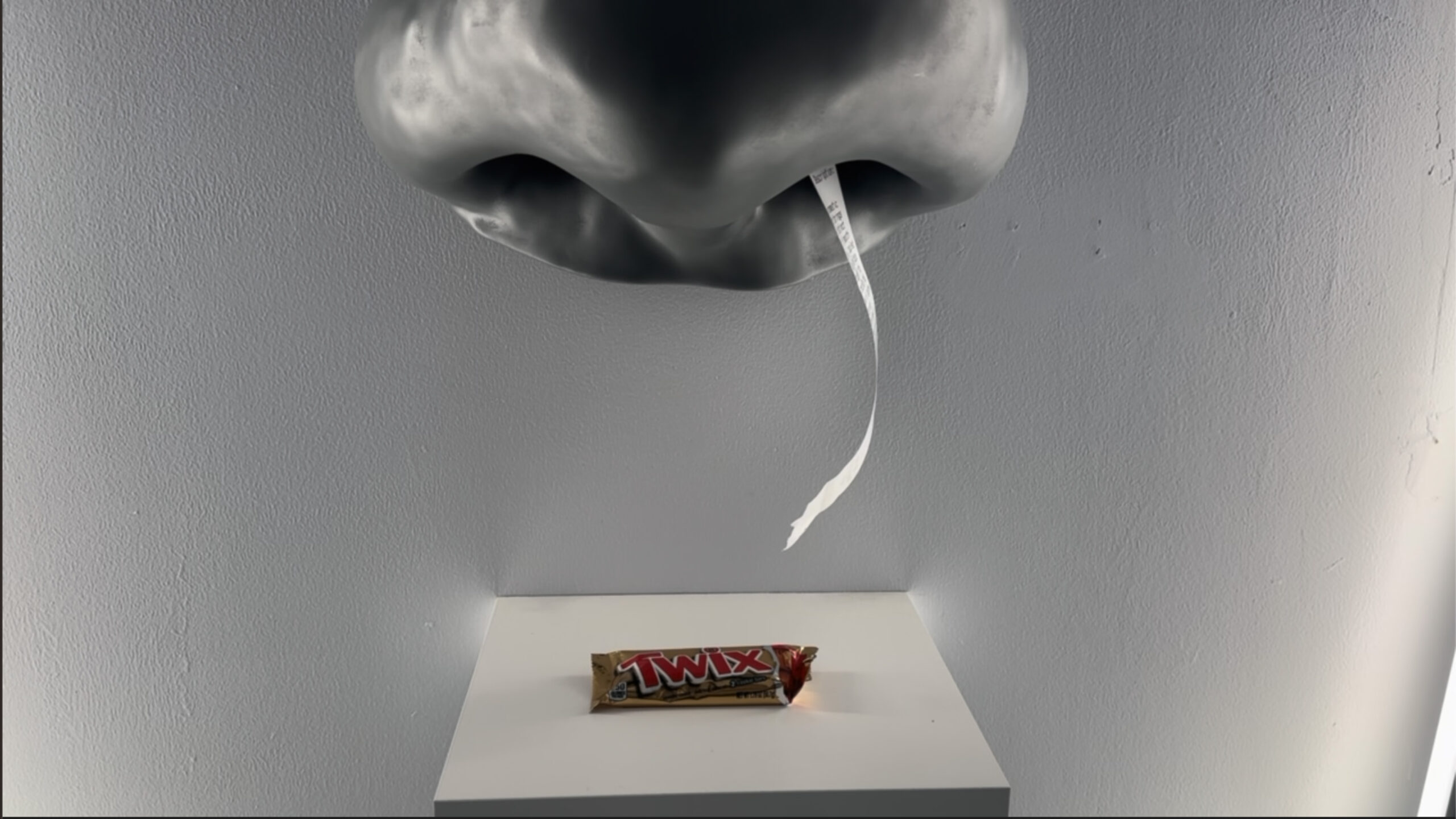

Adnose is an interactive installation that uses machine learning and artificial intelligence to predict the scent of various objects. The sculpture is a 3D replica of the artist's nose. It serves as a reminder of the challenges faced by the anosmic community, who may have difficulty identifying and appreciating scents due to a lack of olfactory function. By providing a unique sensory experience accessible to all, Adnose aims to raise awareness and promote understanding of the challenges faced by individuals with anosmia.

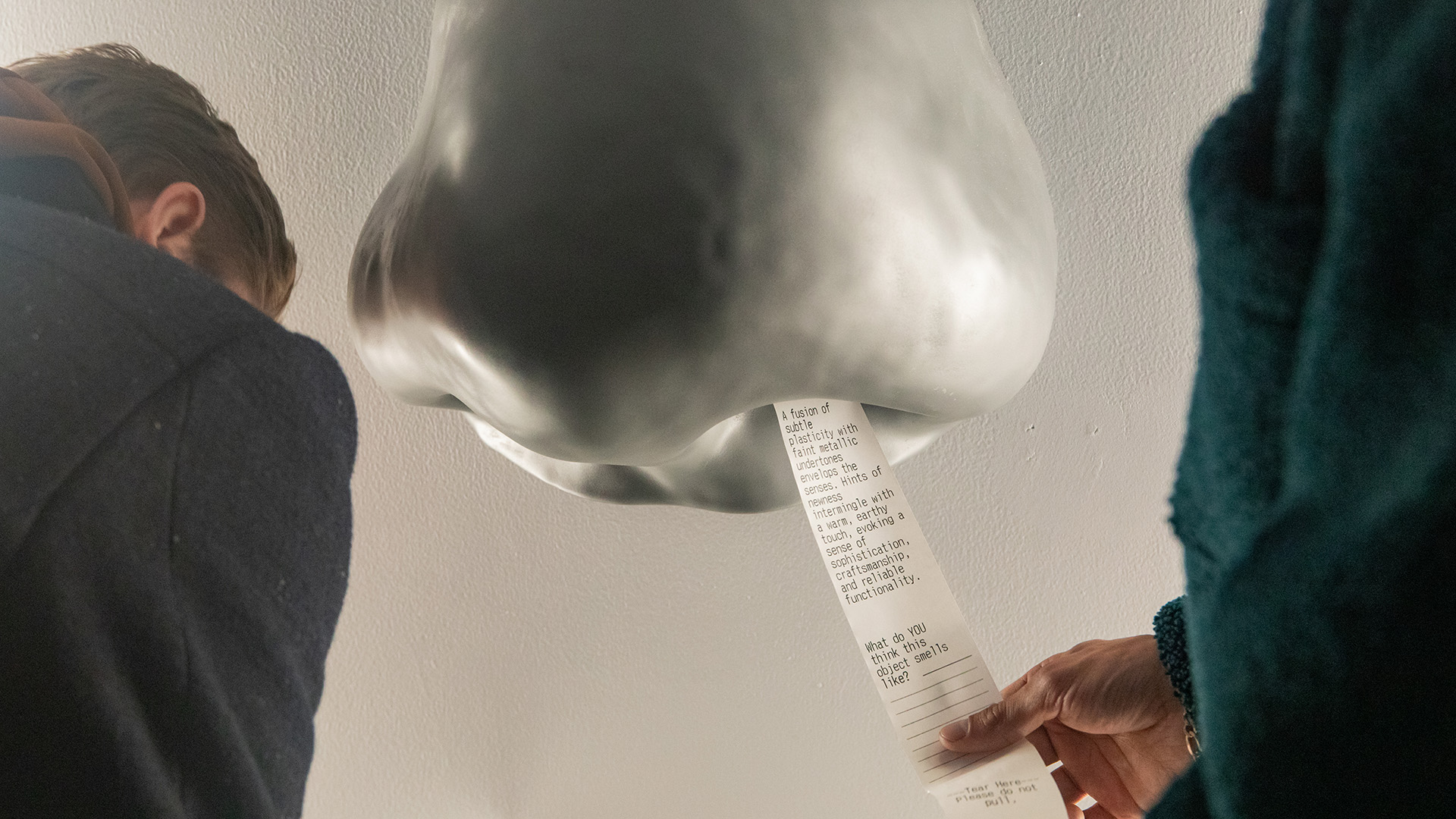

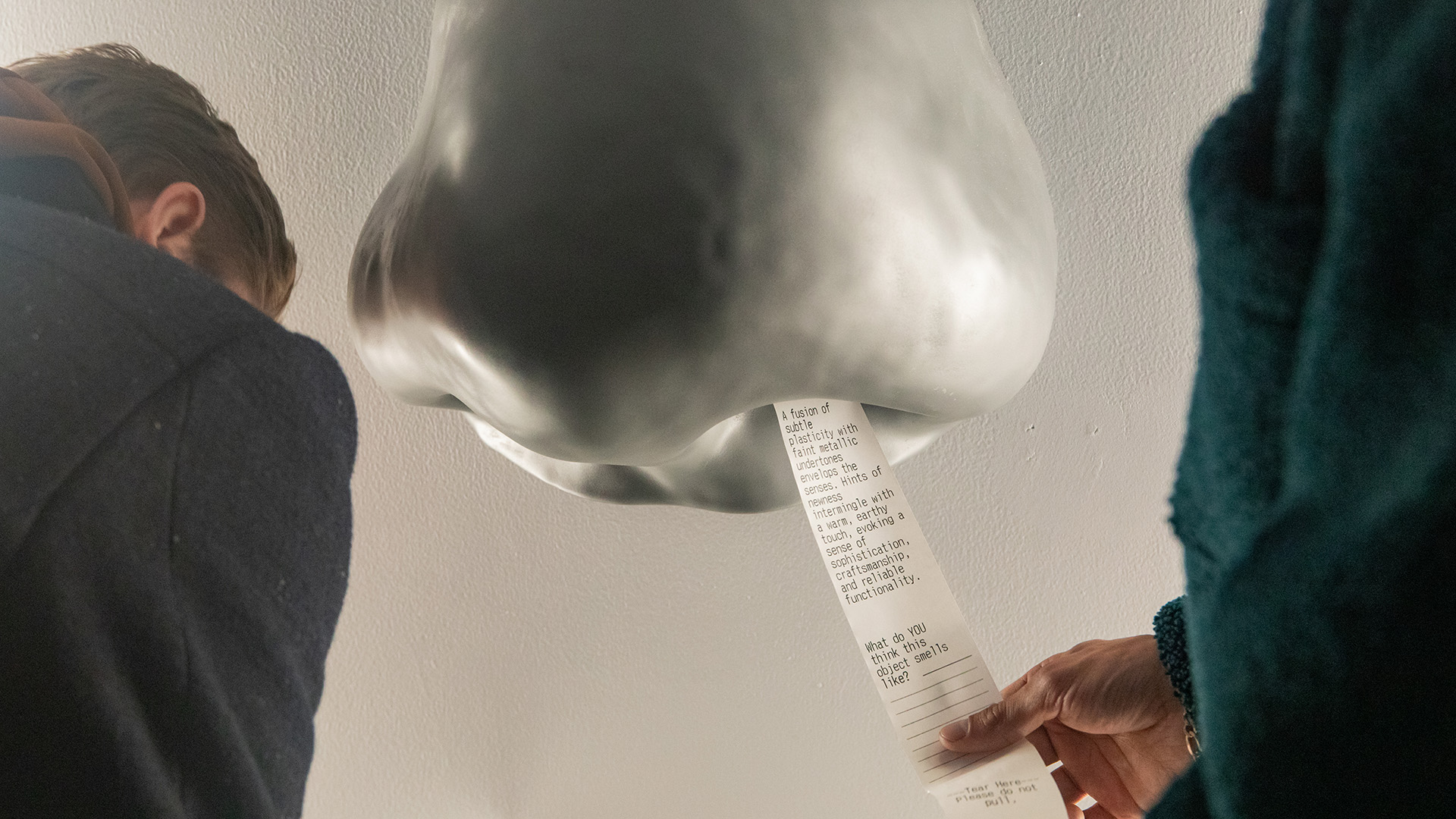

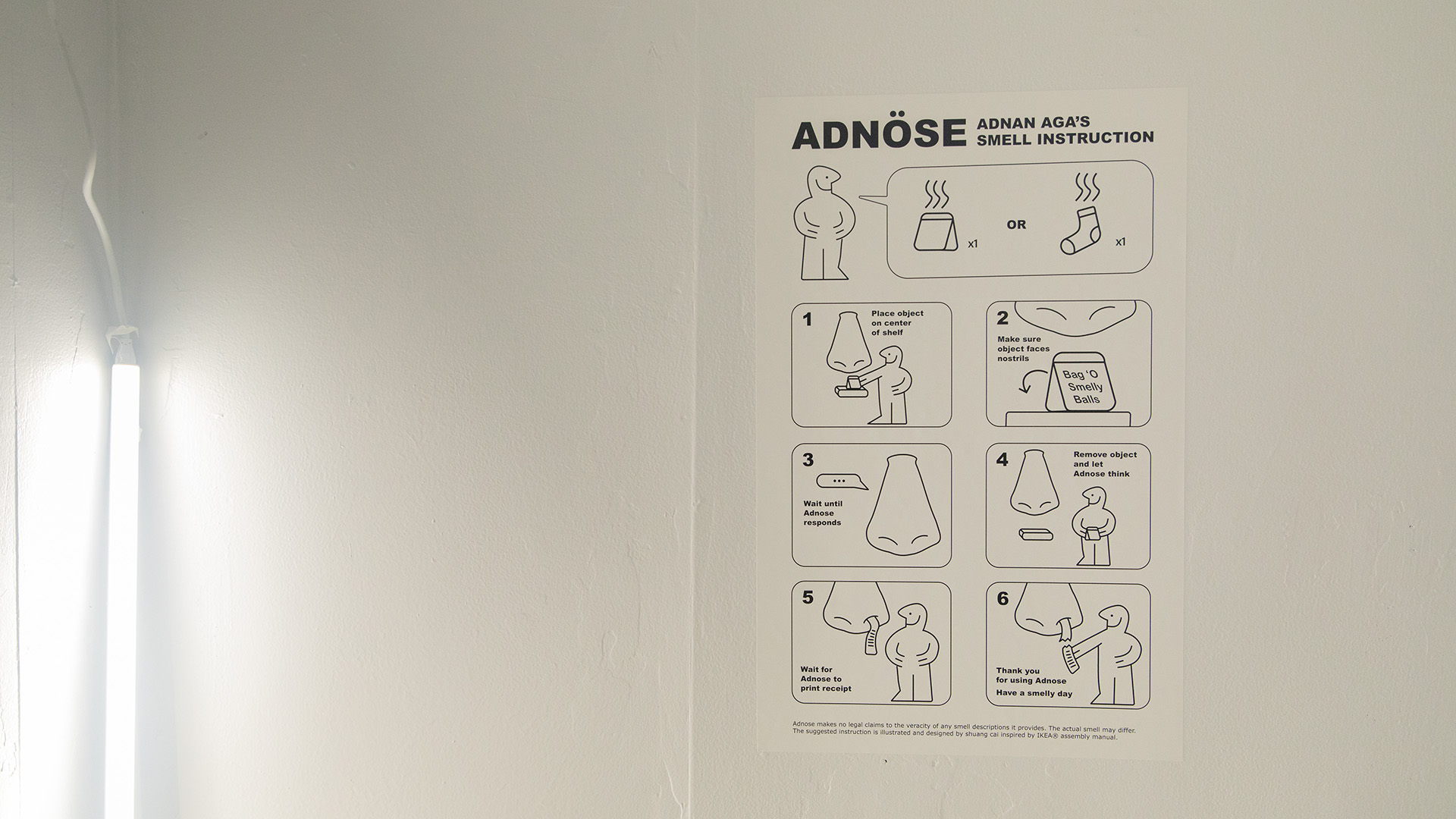

The piece is a working study that attempts to connect different AI tools, which include image recognition and natural language processing algorithms. As the user places an object below the Adnose sculpture, it captures an image of the object, analyzes it using an image recognition algorithm, and then sends that information to a large language model to search for any relevant context clues related to the object's scent. Adnose then generates a prediction of the smell, which is communicated to the user through audio (spoken) and visual (printed) interfaces.

The installation showcases the potential of combining AI and a large sculptural form to create an engaging interaction. This piece's unique approach to scent prediction, conveyed through a visual and olfactory sculpture, makes it a thought-provoking and impactful installation. The insights gained through building this project could be applied to other areas of AI and machine learning to advance our understanding of the human senses and their interaction with technology.

Research

My thesis research took me down multiple roads. It began with the question “How can I (a person with anosmia) understand the sense of smell?” By listening to and consuming media about olfaction, reading scientific papers, and having conversations with medical professionals, I attempted to gain an understanding of how smell works. I was captivated by “The Emperor of Scent”, a book that describes a scientist's journey with his theory of olfaction. I spoke with the scientist in question, Dr. Luca Turin, about his work, my condition, and what I could do with regard to my initial thesis question. He proposed an interesting experience in virtual reality that would visually represent the absence of smell.

Midway down this path, I realized that to create an understanding of smell, I would have to do much more research and approach it in smaller, bite-sized chunks. My surface-level understanding caused me to pivot, so the next step was to try to understand smell through a lens similar to mine. I reached out to other anosmic individuals and started having conversations about what smell means to us: how important it is, what we feel we gain, and what we feel we’re missing out on. One topic that stuck out to me was descriptions of the smell of objects. Not being able to smell means that we as anosmics have to rely on others to give us an accurate description of the smells of the world around us. Using vocabulary that accentuates the nuances of an odor and makes it more accessible to a smell-disabled community is a central focus of Frauke Galia’s practice. This became the seed of Adnose and how it functions.

Technical Details

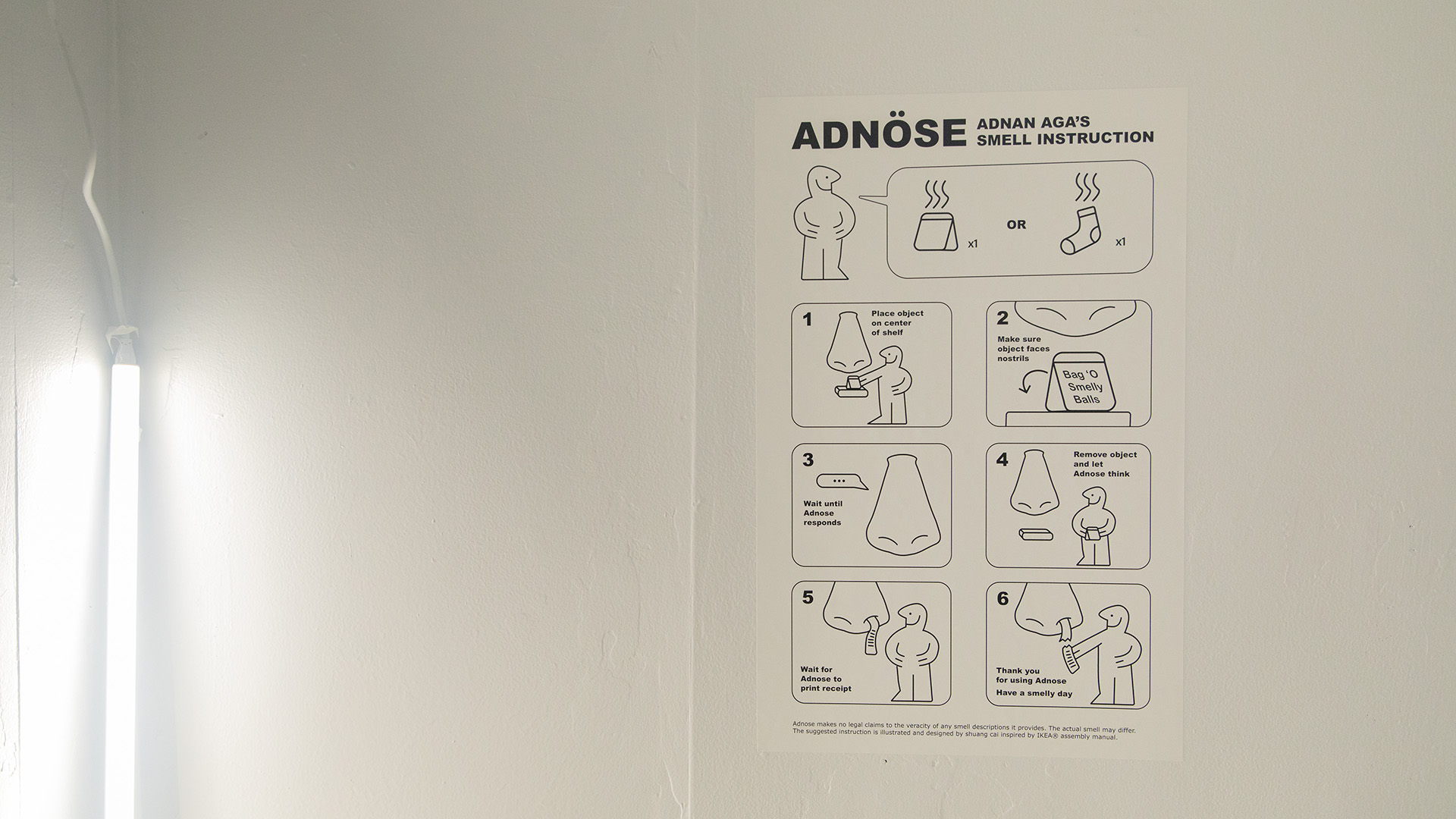

The technical operation of the piece comes down to the following steps:

A distance sensor detects when something has been present underneath the nose for a certain amount of time.

Adnose then asks users to place their item on the shelf below it.

A Raspberry Pi Camera with a fisheye lens then takes a picture of the object.

The picture is sent to Google Images for processing, and the first result is returned.

We then send the result to GPT-4 to generate a description of the smell of the object.

The returned description is then spoken aloud by a synthesized voice from Eleven Labs, a voice cloning and synthesizer service. It is also printed on a receipt by a thermal printer inside the nose, and the receipt emerges from one of the nostrils.
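The steps above could be sketched roughly as follows. This is a minimal illustration and not the code running in the installation: every name here (`wait_for_presence`, `Stages`, `build_prompt`, `run_once`), the 15 cm threshold, the three-sample dwell, and the prompt wording are assumptions made for the example. The camera, Google Images lookup, GPT-4 call, Eleven Labs voice, and thermal printer are represented by plain callables so the flow can be followed without any hardware or API keys.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


def wait_for_presence(readings_cm: Iterable[float],
                      threshold_cm: float,
                      dwell_samples: int) -> bool:
    """Return True once `dwell_samples` consecutive readings are below the threshold."""
    consecutive = 0
    for distance in readings_cm:
        # Any reading beyond the threshold resets the dwell counter.
        consecutive = consecutive + 1 if distance < threshold_cm else 0
        if consecutive >= dwell_samples:
            return True
    return False


@dataclass
class Stages:
    """Hypothetical stand-ins for the hardware and services named in the text."""
    capture_image: Callable[[], bytes]        # e.g. the Raspberry Pi camera
    identify_object: Callable[[bytes], str]   # e.g. first image-search result
    describe_smell: Callable[[str], str]      # e.g. a large language model
    speak: Callable[[str], None]              # e.g. a synthesized voice
    print_receipt: Callable[[str], None]      # e.g. the thermal printer


def build_prompt(label: str) -> str:
    # Assumed prompt wording; the prompt used in the piece is not given.
    return (f"Describe the smell of {label} in two or three vivid sentences "
            "for someone who cannot smell.")


def run_once(readings_cm: Iterable[float],
             stages: Stages,
             threshold_cm: float = 15.0,
             dwell_samples: int = 3) -> Optional[str]:
    """Run one interaction; return the description, or None if nobody stayed."""
    if not wait_for_presence(readings_cm, threshold_cm, dwell_samples):
        return None
    stages.speak("Please place your item on the shelf below.")
    image = stages.capture_image()
    label = stages.identify_object(image)
    description = stages.describe_smell(build_prompt(label))
    stages.speak(description)
    stages.print_receipt(description)
    return description


if __name__ == "__main__":
    # Stubbed stages so the flow can be tried on any machine.
    spoken, printed = [], []
    stubs = Stages(
        capture_image=lambda: b"fake-jpeg-bytes",
        identify_object=lambda image: "orange peel",
        describe_smell=lambda prompt: f"(model reply to: {prompt})",
        speak=spoken.append,
        print_receipt=printed.append,
    )
    print(run_once([40.0, 12.0, 11.0, 10.0], stubs))
```

Keeping each stage behind a callable means any one of them could be replaced, for example a different image recognition service or voice, without touching the rest of the flow.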