Chunhan Chen, Tianyi Xie

Put the real-time weather from your hometown into a jar and carry it with you wherever you go.

http://tianyix.hosting.nyu.edu/blog/ipc/final-proposal-weather-in-a-jar/

Description

Design Concept:

Have you ever lived far away from home and gotten homesick? What if an object could ‘physically’ put your hometown’s real-time weather into a jar on your table, so that you could see it at a glance at any time?

Some people who live far away from home tend to bring something from home as a reminder or representation of their connection with their hometown. The goal of ‘weather in a jar’ is to make this connection even stronger. The real-time weather of a city reflects a very specific moment and location, which can create a unique connection between a person and their hometown regardless of physical distance and time zone.

Process:

Right now, I have the ‘weather jar’ and the Pepper’s ghost effect working, assembled, and ready to show. Inspired by Chunhan Chen’s Pepper’s Cone ICM final project, we are hoping to combine our projects and display the real-time weather effect in 3D.

Next Step:

Technical Approach:

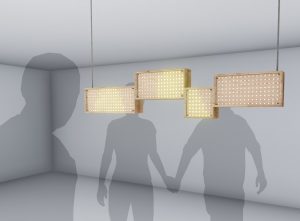

To augment the visual display, a web version of Pepper’s Cone (originally created in Unity by Xuan Luo et al.) was developed to produce the 360-degree hologram at lower cost. Technically, Pepper’s Cone for Web relies on custom GLSL shaders, image pre-distortion, and 3D scene building with three.js, and it is written purely in JavaScript. To render the scene to a distorted texture in real time, a buffer scene that holds the models and environment settings is rendered into a buffer texture. Vertex and fragment shaders then warp that texture using an encoded map.
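The map-based distortion step can be illustrated on the CPU with a minimal sketch in plain JavaScript. It is not the project's shader code. It assumes the encoded map stores, for every output pixel, a normalized (u, v) coordinate that says where to sample the rendered scene. The names `warpWithMap` and `makeFlipMap` are hypothetical, and a simple horizontal flip stands in for the real cone distortion map.

```javascript
// Hypothetical CPU sketch of map-based pre-distortion.
// Assumption: `map` holds two floats (u, v) in [0, 1] per output pixel,
// giving the position in the source image to sample for that pixel.
function warpWithMap(source, srcWidth, srcHeight, map, outWidth, outHeight) {
  const out = new Float32Array(outWidth * outHeight);
  for (let y = 0; y < outHeight; y++) {
    for (let x = 0; x < outWidth; x++) {
      const i = y * outWidth + x;
      const u = map[2 * i];
      const v = map[2 * i + 1];
      // Nearest-neighbour lookup, clamped to the source bounds.
      const sx = Math.min(srcWidth - 1, Math.max(0, Math.round(u * (srcWidth - 1))));
      const sy = Math.min(srcHeight - 1, Math.max(0, Math.round(v * (srcHeight - 1))));
      out[i] = source[sy * srcWidth + sx];
    }
  }
  return out;
}

// Stand-in map for illustration only: mirrors the image horizontally.
// A real Pepper's Cone map would encode the cone's optical distortion instead.
function makeFlipMap(width, height) {
  const map = new Float32Array(width * height * 2);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const i = y * width + x;
      map[2 * i] = width > 1 ? 1 - x / (width - 1) : 0;
      map[2 * i + 1] = height > 1 ? y / (height - 1) : 0;
    }
  }
  return map;
}

// A 2x2 grayscale "scene" stored row by row.
const scene = new Float32Array([1, 2, 3, 4]);
const warped = warpWithMap(scene, 2, 2, makeFlipMap(2, 2), 2, 2);
console.log(Array.from(warped)); // [2, 1, 4, 3]
```

In a GPU version, the same lookup would typically run once per fragment, with the buffer texture and the map both bound as textures, so the loop above disappears into the fragment shader.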

Classes

Introduction to Computational Media