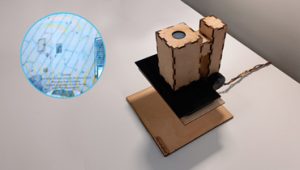

A microhabitat is a virtual open door to a tiny habitat that can be seen only through a microscope.

Jung Huh

https://vimeo.com/488923446

Description

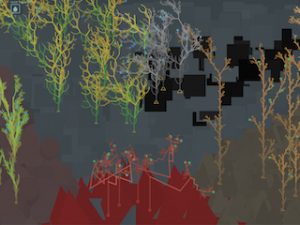

A microhabitat is a virtual open door to a tiny habitat that can be seen only through a microscope. It welcomes the audience into the tiny room of a person living in NYC. The tour is not as big or fancy as the ones in many of YouTube's open-door videos. Compared with the rooms in those videos, this one is so small that viewing it may feel like looking at a microscope slide, where details are visible only under magnification.

The work addresses the housing problem that people in their 20s and 30s are facing. Finding a habitat has become more difficult. The closer you get to the center of a city such as NYC or Seoul, the more expensive rent becomes, the tougher it is to find a place, and the smaller the rooms get. However, no matter how small the room may be, a person lives there with their own unique story and a big dream.

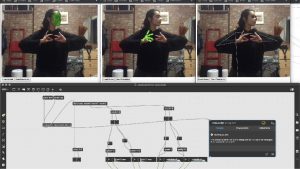

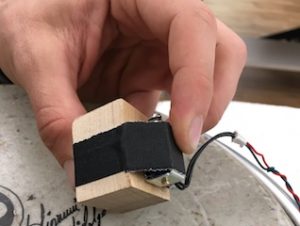

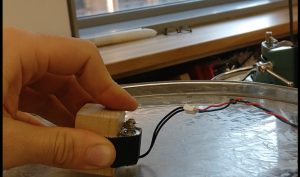

The audience peeks into a person's small room through a microscope that has two controllers. Turning the knob on the right side, the stage controller, rotates the camera situated at the center of the microhabitat so the audience can look around the room. Turning the knob on the left side, the coarse adjustment, lets the user look closely at specific objects in the room. Each object carries a personal story of the person living there, much like the product details that YouTube shows for objects in an open-door video.
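The two-knob interaction described above could be sketched roughly as follows. This is only an illustration, not the project's actual code: it assumes each knob is read as a 10-bit analog value (0 to 1023, as from a potentiometer), and the names `knobToYaw`, `knobToZoom`, `viewFromKnobs`, and the 8x maximum zoom are all hypothetical.

```javascript
// Hypothetical mapping from two knob readings to a camera view.
// Assumption: each knob yields a 10-bit analog reading (0..1023).
const KNOB_MAX = 1023;

// Keep a value inside the closed range [lo, hi].
function clamp(value, lo, hi) {
  return Math.min(hi, Math.max(lo, value));
}

// Right knob (stage controller): rotate the camera around the room.
// Maps the full knob range to 0..360 degrees of yaw.
function knobToYaw(reading) {
  const t = clamp(reading, 0, KNOB_MAX) / KNOB_MAX;
  return t * 360;
}

// Left knob (coarse adjustment): zoom in on details of an object.
// Maps the full knob range to 1x..maxZoom (maxZoom is an assumed value).
function knobToZoom(reading, maxZoom = 8) {
  const t = clamp(reading, 0, KNOB_MAX) / KNOB_MAX;
  return 1 + t * (maxZoom - 1);
}

// Combine both readings into one view description for the renderer.
function viewFromKnobs(rightReading, leftReading) {
  return {
    yawDegrees: knobToYaw(rightReading),
    zoom: knobToZoom(leftReading),
  };
}
```

In a sketch like this, a draw loop would call `viewFromKnobs` on each frame with the latest readings and use the result to orient and scale the view of the room.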

Virtual Experience

https://editor.p5js.org/jhuh3226/present/ErsWzJ5mn

ITPG-GT.2301.00008, ITPG-GT.2048.00003

Intro to Phys. Comp., ICM – Media

Narrative/Storytelling, Social Good/Activism