Tell me your feeling, I hear, and I feel you.

Eden Chinn, Rui Shang

https://youtu.be/AsxloEstQ4U

Description

As humans, our existence is defined by different emotional states. When we feel an emotional impulse, it's like a ripple is dropped inside of us. This ripple flows outward and is reflected in how we perceive the world around us, as well as how we act within it.

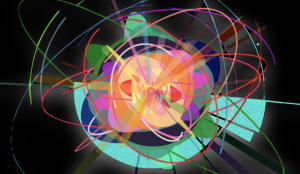

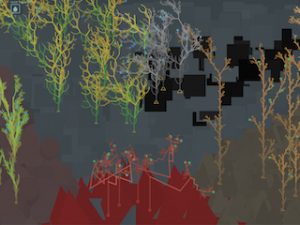

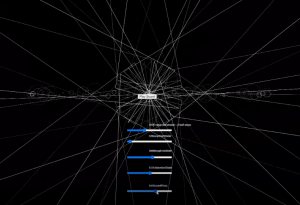

For this project, we wanted to visualize emotional states using colors, shapes, and sounds in a poetic way.

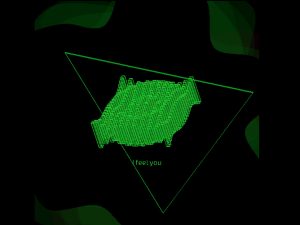

First, we divided emotion words into six categories: happy, content, sad, angry, shocked, and afraid. We then used p5.speech to recognize spoken words rather than training our own word classifier in Teachable Machine, because p5.speech proved far more accurate. The project can currently recognize over 110 emotion words.
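The word-to-category step can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the word lists are short placeholders (the real vocabulary of 110+ words is not listed here), and `EMOTION_WORDS`, `WORD_TO_CATEGORY`, and `classifyEmotion` are hypothetical names. It assumes the recognized speech arrives as a plain string, as p5.speech typically exposes through the recognizer's `resultString`.

```javascript
// Placeholder vocabulary: the six categories come from the project,
// but these word lists are illustrative assumptions only.
const EMOTION_WORDS = {
  happy: ["happy", "joyful", "excited"],
  content: ["content", "calm", "relaxed"],
  sad: ["sad", "lonely", "gloomy"],
  angry: ["angry", "furious", "annoyed"],
  shocked: ["shocked", "surprised", "stunned"],
  afraid: ["afraid", "scared", "anxious"],
};

// Invert the table once so each lookup is a single Map access.
const WORD_TO_CATEGORY = new Map();
for (const [category, words] of Object.entries(EMOTION_WORDS)) {
  for (const word of words) {
    WORD_TO_CATEGORY.set(word, category);
  }
}

// Return the category of the first known emotion word in the
// transcript, or null when no word matches.
function classifyEmotion(transcript) {
  const tokens = transcript.toLowerCase().match(/[a-z]+/g) || [];
  for (const token of tokens) {
    const category = WORD_TO_CATEGORY.get(token);
    if (category) {
      return category;
    }
  }
  return null;
}
```

In a sketch, the returned category could then select the color, shape, and audio filter for that emotion, while a `null` result would leave the current state unchanged.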

We created a flowing 3D object and used the sin() function to generate a ripple. More importantly, we built multiple audio filters for a single song, each responding to a different emotion, and the song's amplitude affects the frequency of the ripple. For the visuals, we matched colors and custom shapes to emotion words based on color and shape psychology, which we believe gives people an immersive experience.
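One way the amplitude-driven ripple could work is sketched below. This is an assumption about the approach rather than the project's implementation: `rippleFrequency`, `rippleHeight`, and the constants `baseFreq`, `gain`, and `maxHeight` are hypothetical, and `level` stands for a loudness value between 0 and 1, such as the one p5.sound's `p5.Amplitude` reports through `getLevel()`.

```javascript
// Map a loudness level in [0, 1] to a spatial frequency for the ripple.
// baseFreq and gain are illustrative values, not taken from the project.
function rippleFrequency(level, baseFreq = 0.05, gain = 0.2) {
  const clamped = Math.min(Math.max(level, 0), 1);
  return baseFreq + gain * clamped;
}

// Height of the surface at point (x, y) and time t. The wave travels
// outward from the origin; a louder song packs the rings closer together.
function rippleHeight(x, y, t, level, maxHeight = 40) {
  const distance = Math.hypot(x, y);
  const freq = rippleFrequency(level);
  return maxHeight * Math.sin(distance * freq - t);
}
```

Evaluating `rippleHeight` for every vertex of a grid on each frame, with `t` advancing over time, would displace the mesh into a moving ripple whose ring spacing follows the music.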

Tell me your feeling with one word.

I hear you, I feel you.

ITPG-GT.2048.00007

ICM – Media

Machine Learning, Music