Cristobal Valenzuela

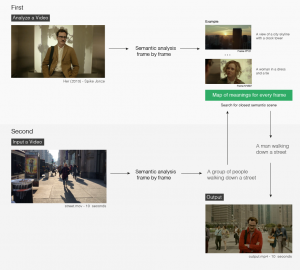

A tool to find semantically similar scenes across a pair of videos.

https://github.com/cvalenzuela/scenescoop

Description

Scenescoop is a tool that finds semantically similar scenes across a pair of videos. You provide an input video and get back a scene with a similar meaning from another (transfer) video. Scenescoop runs the im2txt TensorFlow image-captioning model frame by frame over both the input and the transfer video. It then uses spaCy to average the word vectors of each generated caption into a single sentence vector, and Annoy to build an index over those vectors for fast nearest-neighbor lookup.
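The matching step can be sketched in plain Python. This is a minimal illustration, not Scenescoop's code, and it rests on several assumptions. The frame captions are hard-coded strings standing in for im2txt output. The tiny 3-dimensional `WORD_VECTORS` table is hypothetical and stands in for spaCy's pretrained vectors. The brute-force cosine search in `nearest_frame` replaces Annoy's approximate index. It shows the core idea: average the word vectors in each caption, then return the transfer-video frame whose caption vector lies closest to the query caption's vector.

```python
import math

DIM = 3

# Hypothetical toy word vectors, for illustration only. A real pipeline would
# use pretrained vectors (e.g. from a spaCy model) with hundreds of dimensions.
WORD_VECTORS = {
    "a": [0.1, 0.1, 0.1],
    "the": [0.1, 0.1, 0.1],
    "on": [0.1, 0.1, 0.1],
    "man": [0.9, 0.1, 0.0],
    "person": [0.8, 0.2, 0.1],
    "riding": [0.1, 0.9, 0.1],
    "horse": [0.0, 0.7, 0.6],
    "dog": [0.1, 0.2, 0.9],
    "sitting": [0.2, 0.1, 0.5],
    "couch": [0.3, 0.0, 0.8],
}


def sentence_vector(caption):
    """Average the vectors of the known words in a caption (unknown words are skipped)."""
    vectors = [WORD_VECTORS[w] for w in caption.lower().split() if w in WORD_VECTORS]
    if not vectors:
        return [0.0] * DIM
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(DIM)]


def cosine_distance(u, v):
    """1 - cosine similarity; returns 1.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / norm if norm else 1.0


def nearest_frame(query_caption, transfer_captions):
    """Brute-force stand-in for an Annoy lookup: index of the closest caption."""
    query = sentence_vector(query_caption)
    distances = [cosine_distance(query, sentence_vector(c)) for c in transfer_captions]
    return min(range(len(distances)), key=distances.__getitem__)


if __name__ == "__main__":
    # Hypothetical per-frame captions for the transfer video.
    transfer = [
        "a dog sitting on the couch",
        "a person riding a horse",
        "the man sitting on the couch",
    ]
    print(nearest_frame("a man riding a horse", transfer))  # → 1
```

A linear scan like this compares the query against every frame. An index such as Annoy trades exact results for much faster approximate lookups, which matters once a video yields thousands of captioned frames.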

Classes

Automating Video