Idith Barak, Jacky Chen, Tsimafei Lobiak

A living plant made out of fabric.

https://wp.nyu.edu/tlobiak/2018/11/30/weeks-10-12-final-project-progress/

Description

Team: Idit Barak, Jacky Chen, Timothy Lobiak

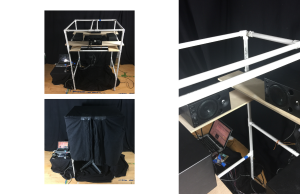

Our project is called the Giving Plant. It is a white-on-white soft sculpture inspired by the iconic bird of paradise flower. Onlookers use our custom-built water can to water the plant. As they do, visual cues guide them through the rest of the sequence. At the end of the sequence, the majestic bird of paradise flower blooms and emits a relaxing scent in the form of a cooling mist.

Each of the two water cans uses an absolute orientation sensor (BNO055) to detect changes in its orientation. When a can is pointed downward, three 12V LEDs turn on. The user points the mouth of the water can at the root of the plant to “water” the plant with light, which triggers the LDRs (light-dependent resistors) embedded in the planter. There are also sealed water capsules built into the water can to create
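The tilt check described above could be sketched roughly as follows. This is a logic-only illustration in Python, not the project's firmware. It assumes the sensor's gravity vector is available as an (x, y, z) tuple, that the spout points along the sensor's +x axis, and that gravity reads positive on an axis pointing at the ground. The thresholds and the names `spout_dip_degrees` and `PourDetector` are hypothetical.

```python
import math

# Assumed thresholds (not from the project): the spout must dip 30 degrees
# below horizontal to start "pouring", and rise above 20 degrees to stop.
# The gap between the two keeps the LEDs from flickering near the edge.
POUR_ON_DEG = 30.0
POUR_OFF_DEG = 20.0


def spout_dip_degrees(gravity):
    """Angle of the spout below horizontal, from a gravity vector (gx, gy, gz).

    Assumes the spout lies along the sensor's +x axis and that gravity is
    positive along an axis that points toward the ground.
    """
    gx, gy, gz = gravity
    norm = math.sqrt(gx * gx + gy * gy + gz * gz)
    if norm == 0:
        return 0.0  # no usable reading; treat the can as level
    ratio = max(-1.0, min(1.0, gx / norm))
    return math.degrees(math.asin(ratio))


class PourDetector:
    """Tracks whether the can is currently tipped far enough to 'pour' light."""

    def __init__(self):
        self.pouring = False

    def update(self, gravity):
        dip = spout_dip_degrees(gravity)
        if self.pouring:
            if dip < POUR_OFF_DEG:
                self.pouring = False
        elif dip > POUR_ON_DEG:
            self.pouring = True
        return self.pouring
```

In a sketch like this, the return value of `update` would drive the LEDs in the can: on while it is `True`, off otherwise.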

When the plant is watered, the LED strips embedded in the stem display segments of lit LEDs moving up the stem, feeding “light” into the leaves and the flower. Once the leaves have received “enough” light, they gradually light up from bottom to top.
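One way to picture the stem and leaf animations is as a series of on/off frames, with index 0 at the bottom of the strip. The sketch below is only an illustration under assumed parameters: the strip lengths, segment sizes, and the function names `chase_frame` and `fill_frame` are hypothetical, and driving a real LED strip would need a hardware library on top of this.

```python
def chase_frame(num_leds, segment_len, gap_len, step):
    """One frame of lit segments travelling up the stem.

    Returns a list of booleans (True = lit), index 0 at the bottom.
    Increasing `step` by one shifts every segment up by one LED.
    """
    period = segment_len + gap_len
    return [((i - step) % period) < segment_len for i in range(num_leds)]


def fill_frame(num_leds, fraction):
    """One frame of a leaf filling with light from the bottom up.

    `fraction` runs from 0.0 (dark) to 1.0 (fully lit).
    """
    fraction = max(0.0, min(1.0, fraction))
    lit = int(num_leds * fraction)
    return [i < lit for i in range(num_leds)]
```

Calling `chase_frame` with a growing `step` on every tick gives the moving segments, and ramping `fraction` from 0 to 1 in `fill_frame` gives the gradual bottom-to-top fill of a leaf.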

After the leaves are fully lit, the shape memory alloys (SMA) are triggered by running current through them. The SMA change shape, and as a result the flower blooms. An air pump then pushes mist out of a mist-making chamber through silicone tubing; essential oil mixed into the mist gives it a lovely scent. After a short pause, the mist stops and the flower closes its petals, pulled shut by a second set of SMA acting in the opposite direction.
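Taken together, the interaction reads like a small state machine. The following is a minimal sketch of that idea, assuming the stages run strictly one after another and that each stage advances on a timer. The stage names, the durations, and the `watered` flag (standing in for the LDR reading) are all assumptions for illustration, not details from the project.

```python
from enum import Enum, auto


class Stage(Enum):
    IDLE = auto()    # waiting for the plant to be watered
    STEM = auto()    # segments of light travel up the stem
    LEAVES = auto()  # leaves fill with light from bottom to top
    BLOOM = auto()   # opening SMA powered, flower blooms
    MIST = auto()    # air pump pushes scented mist out
    CLOSE = auto()   # closing SMA powered, petals pull shut


ORDER = [Stage.IDLE, Stage.STEM, Stage.LEAVES,
         Stage.BLOOM, Stage.MIST, Stage.CLOSE]

# Assumed stage lengths in seconds (placeholders, not measured values).
DURATION_S = {
    Stage.STEM: 3.0,
    Stage.LEAVES: 4.0,
    Stage.BLOOM: 5.0,
    Stage.MIST: 8.0,
    Stage.CLOSE: 5.0,
}


def next_stage(stage, elapsed_s, watered):
    """Return the stage to be in after `elapsed_s` seconds in `stage`."""
    if stage is Stage.IDLE:
        return Stage.STEM if watered else Stage.IDLE
    if elapsed_s < DURATION_S[stage]:
        return stage
    return ORDER[(ORDER.index(stage) + 1) % len(ORDER)]


def outputs(stage):
    """Which actuators are on in a given stage."""
    return {
        "stem_chase": stage is Stage.STEM,
        "leaves_lit": stage in (Stage.LEAVES, Stage.BLOOM, Stage.MIST),
        "sma_open": stage is Stage.BLOOM,
        "mist_pump": stage is Stage.MIST,
        "sma_close": stage is Stage.CLOSE,
    }
```

Keeping the two SMA sets in separate stages means they are never powered at the same time, which seems a sensible default since they pull in opposite directions.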

Classes

Introduction to Physical Computing