Brett Stiller

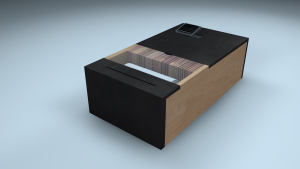

A pair of ‘partnered’ I/O devices that make tangible the simple human exchange of a long-distance ‘Good night’.

http://www.brettstiller.com/procul

Description

Technology promises to connect us and to serve human communication in ways never before possible. In theory, for example, a long-distance relationship should now be as fulfilling as a face-to-face one.

But what happens when the technology falls short and becomes an obstacle, and we begin to resent our devices as the conduits of our relationships?

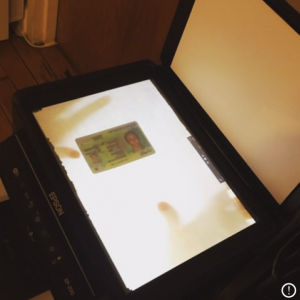

Using the untethered freedom of a conversational user interface (CUI), speech-to-text and the internet, these ‘coupled’ objects capture the last spoken thoughts and wishes of one partner's ‘Good night’, give them physical form, and deliver them to the other partner on waking.

Individually, these physical receipts, artifacts or tokens may seem trivial as they are collected, used as bookmarks, lost, pinned to the fridge, thrown away or tucked into a wallet. As they accumulate, however, they begin to represent something larger. They distill the sentiment of people trying to communicate effectively over distance. They are also a stand against the ethereal digitization of our experiences and relationships, against the automation of intimacy, and against our losing grip, so to speak, on things we can touch and hold.

Classes

Thesis