Dan Oved

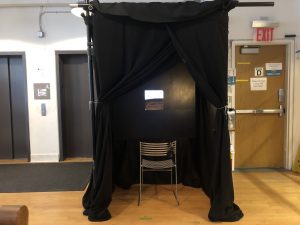

The self-driving human is a device and performance in which an intelligent portable agent makes decisions for a person in the real world.

https://www.danioved.com/self-driving-human

Description

As technology becomes increasingly integrated into our daily lives, we rely on it more and more to make decisions for us: how we should get from one place to the next (Waze), what we should have for dinner (Yelp, Tripadvisor), what content we should see on the internet (social media), and who we should date (dating apps). What would a future feel like in which all of our decisions are made by these machines?

The self-driving human simulates this scenario by making choices for the human in areas that are currently not decided by technology. It’s a portable device that detects objects around the person using a camera and machine learning, and gives commands to the user on how to interact with the environment based on an arbitrary algorithm that changes day by day. It allows the person to outsource thinking and decision making to an algorithm that they do not entirely understand.
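As a rough illustration only, and not the code running on the device, the idea of an arbitrary rule set that changes from day to day could be sketched like this. The label list, the command phrases, and the function names `rules_for_day` and `decide` are all hypothetical, and the detected labels are assumed to come from some on-device object detector that is not shown here.

```python
import datetime
import random

# Hypothetical vocabularies, invented for this sketch.
LABELS = ["person", "chair", "door", "cup", "dog"]
COMMANDS = [
    "walk toward the {}",
    "turn away from the {}",
    "touch the {}",
    "wait beside the {}",
]


def rules_for_day(day):
    """Build the label -> command mapping for a given date.

    Seeding the generator with the date makes the rules arbitrary
    but stable for the whole day.
    """
    rng = random.Random(day.toordinal())
    return {label: rng.choice(COMMANDS) for label in LABELS}


def decide(detected_labels, day):
    """Return a command for the first detected label the agent knows.

    Labels outside the vocabulary are ignored; if nothing is
    recognised, no command is given (None).
    """
    rules = rules_for_day(day)
    for label in detected_labels:
        if label in rules:
            return rules[label].format(label)
    return None


if __name__ == "__main__":
    today = datetime.date.today()
    print(decide(["umbrella", "chair"], today))
```

In this sketch the person carrying the device only ever hears the resulting command, never the mapping behind it, and anything the detector has no label for simply produces no instruction.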

The agent’s decision space is limited to what the machine has been trained to see. The user does not know the logic of the algorithm, and while the machine does know its objective, it does not know whether that objective is good or bad. What happens when the objectives of this machine differ from the objectives of the person it’s meant to serve? How do the choices made by the algorithm collide with the human’s desires when they’re disconnected from the biases and emotions that the person has learned throughout his or her life?

The device is portable and works totally offline using machine learning on the edge, allowing for real-time response even where there is no internet connection, and maintaining privacy as all data stays on the device.

For the performance, I carry it in the real world, where I listen to its commands and attempt to do as I’m instructed, no matter how uncomfortable it makes me.

Classes

Thesis

Thesis Presentation Video

https://vimeo.com/331468540/settings