ITP Weather Band is an experimental band creating music, interactive objects, and visuals with weather data collected from a DIY weather station. Come meet the band and learn about our weather system.

Atchareeya Name Jattuporn, Lu Lyu, Cy Kim, Schuyler DeVos, Siyuan Zan, Sihan Zhang, Yiting Liu, Yeseul Song

https://youtube.com/playlist?list=PLivoY25uYfN_zJ3dkhvGiS7i4OoCJO5qt

Description

ITP Weather Band is an experimental band creating music, interactive objects, and visuals with weather data collected from a DIY weather station. We built a DIY weather station system and created experimental instruments that turn the environmental data into music and visuals. We explore new ways of delivering information and stories about our immediate environment through the auditory and visual senses. The project is growing into an open-source effort.

The band consists of faculty members, alumni, and graduate students at New York University’s Interactive Telecommunications Program (NYU ITP) and beyond. We started the weather station project in 2019 as a subgroup of ITPower and launched the band in January 2020.

Details and full credits are available at https://github.com/ITPNYU/weather-band.

For the Weather Band website, we worked with a special collaborator, Yichan Wang (https://yichanwang.me/), who is pursuing her MFA in Design and Technology at Parsons School of Design.

At the ITP Winter Show 2020, we're introducing:

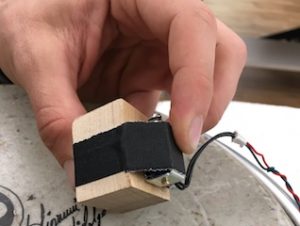

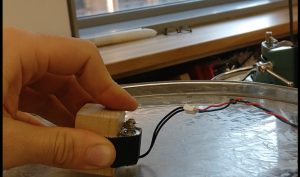

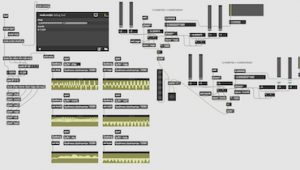

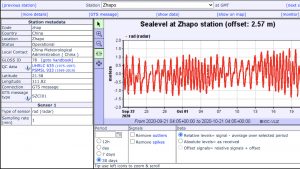

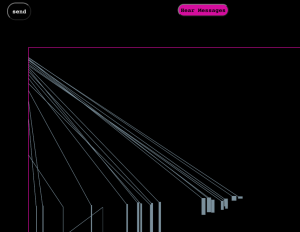

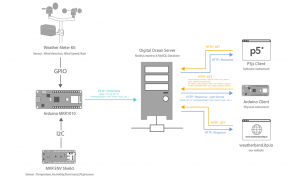

* ITP Weather Station System by ITP Weather Band

A DIY weather station system that posts its data to the web, where it can be accessed from anywhere in the world for creative projects.

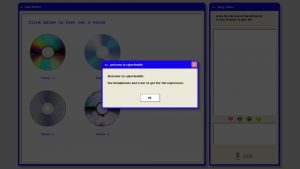

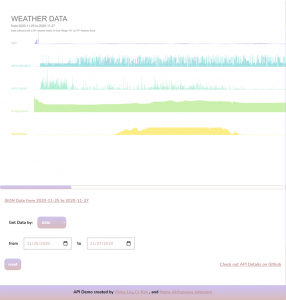

* Weather Data Visualization Interfaces by Yiting Liu, Atchareeya Name Jattuporn, and Cy Kim

These web interfaces let you visualize all of the data or select a subset of it by date, ID number, or category.

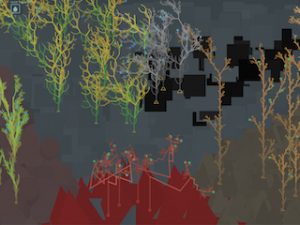
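The interfaces' source code isn't shown here, so the following is only a hypothetical Python sketch of how selecting readings by date range, ID number, and category might work. The `Reading` record, its field names, and the `select_readings` helper are assumptions for illustration, not the weather station's actual data schema or the interfaces' implementation.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Reading:
    # Hypothetical record shape; field names are assumptions, not the station's real schema.
    reading_id: int
    day: date
    category: str  # e.g. "temperature", "wind_speed", "rain"
    value: float


def select_readings(readings, start=None, end=None, ids=None, categories=None):
    """Return readings that match every filter that is set; None means 'don't filter on this'."""
    result = []
    for r in readings:
        if start is not None and r.day < start:
            continue  # before the chosen date range
        if end is not None and r.day > end:
            continue  # after the chosen date range
        if ids is not None and r.reading_id not in ids:
            continue  # not one of the requested ID numbers
        if categories is not None and r.category not in categories:
            continue  # not one of the requested categories
        result.append(r)
    return result


readings = [
    Reading(1, date(2020, 12, 1), "temperature", 4.5),
    Reading(2, date(2020, 12, 2), "wind_speed", 3.2),
    Reading(3, date(2020, 12, 3), "rain", 0.8),
]
selected = select_readings(readings, start=date(2020, 12, 2), categories={"wind_speed", "rain"})
print([r.reading_id for r in selected])  # [2, 3]
```

Calling `select_readings` with no filters returns every reading, which corresponds to the "visualize all of the data" view; each extra argument narrows the selection.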

* Weather Band Website by Yichan Wang (The New School, visual system design) and Schuyler W. DeVos (development)

A visual system and website featuring a graphical weather mobile that responds to real-time weather data.

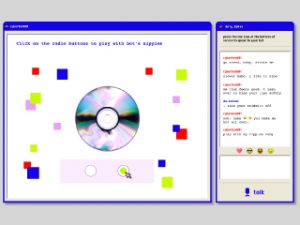

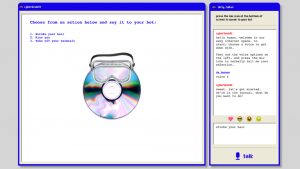

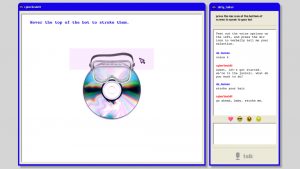

* Weather Instruments made by band members, including:

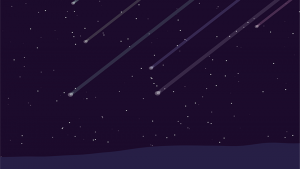

Meteor Shower by Sihan Zhang: a p5.js sketch that generates a meteor shower scene and relaxing music from wind and rain data

Gust Sound by Lu Lyu (shown at a separate spot on Yorb)

https://itp.nyu.edu/shows/winter2020/2-5-miracle-and-gust-sound/

Leaf Dance by Siyuan Zan (shown at a separate spot on Yorb)

https://itp.nyu.edu/shows/winter2020/leaf-dance/

ITPG-GT.2808.001, ITPG-GT.2301.00004, ITPG-GT.99999.001

Understanding Networks, Intro to Phys. Comp., ITP Weather Band

Sound, Sustainability