Guillermo Montecinos

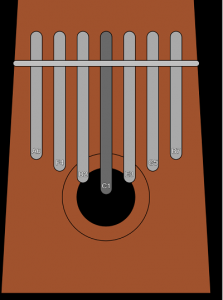

sonAR is an augmented reality sound experience that lets the user explore musical elements in space.

Description

Thanks to augmented reality, new layers of perception can be built on top of the world. AR technologies are now integrated into mobile devices, enabling users to see, listen to, and move around elements that don’t actually exist in the physical world but coexist with us in temporal instances of virtual worlds.

“sonAR” explores the construction of an imaginary sound landscape built from basic elements that are virtually represented in 3D space. Through the field of view of the mobile screen, the user can discover these sound elements and interact with them while moving through space.

Classes

Music Interaction Design