Zoe Bachman

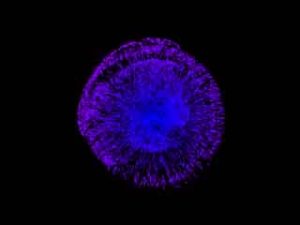

A translation device that allows humans to speak to coral.

http://www.zoebachman.net/itp/?p=774

Description

Aural Reef is a translation device that takes human speech and transforms it into coral reef noise in an attempt to help restore reef populations. 27% of the world's coral reefs have been lost. If present rates of destruction continue, 60% of the world's coral reefs will be destroyed over the next 30 years. 2016 is on track to experience the worst coral bleaching events yet, following similar episodes in both 2014 and 2015.

What if we could talk to coral and what if those messages actually did something helpful?

Research has shown that the noisier a reef is, the healthier it is. Scientists are starting to use audio as a way of quickly monitoring the state of a reef. Studies show that coral larvae and young fish are attracted to the sounds of the reef, which guide them to coastal areas and allow them to identify suitable settlement habitats. Researchers found that when they installed underwater speakers playing coral reef noise, the coral larvae consistently swam toward the speakers, even when the speakers were above them (the larvae have a natural tendency to swim downward).

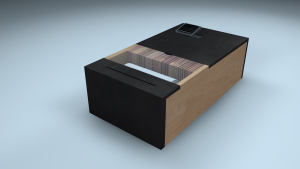

Aural Reef is a prototype of a telecommunication device that enables humans to communicate with coral and help in reef restoration through audio translation. A Max/MSP patch detects the presence and pitch of the voice, then alters and plays back a coral reef noise sound file so that it matches. The idea is that the more people speak, the more artificial reef noise is generated, drawing larvae to depopulated reef structures and helping to regrow coral colonies.
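The patch itself is built in Max/MSP and is not reproduced here. As a rough illustration of the same idea in Python, the sketch below gates on loudness to detect a voice, estimates its pitch with a simple autocorrelation search, and turns that pitch into a playback rate for the reef recording. Everything in it is an assumption made for illustration: the function names, the 80 to 400 Hz vocal range, the loudness gate, the 220 Hz reference pitch assigned to the reef sound file, and the choice of autocorrelation, which may differ from what the actual patch does.

```python
import math


def detect_pitch(frame, sample_rate, fmin=80.0, fmax=400.0, rms_gate=0.01):
    """Return an estimated fundamental in Hz, or None when no voice is present."""
    if not frame:
        return None
    # Presence check: frames quieter than the (assumed) gate count as silence.
    rms = math.sqrt(sum(s * s for s in frame) / len(frame))
    if rms < rms_gate:
        return None
    # Only search lags that correspond to the assumed vocal range.
    min_lag = int(sample_rate / fmax)
    max_lag = min(int(sample_rate / fmin), len(frame) - 1)
    best_lag, best_score = None, 0.0
    for lag in range(min_lag, max_lag + 1):
        # Autocorrelation: how well the frame matches itself shifted by `lag`.
        score = sum(frame[n] * frame[n + lag] for n in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag if best_lag else None


def playback_rate(voice_hz, reference_hz=220.0, lo=0.5, hi=2.0):
    """Map the voice pitch to a speed factor for the reef recording.

    reference_hz is a hypothetical nominal pitch assigned to the recording;
    the result is clamped so the reef noise is never stretched too far.
    """
    return max(lo, min(hi, voice_hz / reference_hz))


def resample(samples, rate):
    """Play samples back at `rate` times normal speed via linear interpolation."""
    out = []
    pos = 0.0
    last = len(samples) - 1
    while pos < last:
        i = int(pos)
        frac = pos - i
        out.append(samples[i] * (1.0 - frac) + samples[i + 1] * frac)
        pos += rate
    return out
```

In use, each incoming microphone frame would go through `detect_pitch`; when it returns a value, `playback_rate` gives the speed at which the next chunk of the reef recording is passed through `resample` before being sent to the speaker, and when it returns `None` nothing is played.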

I’m currently using a regular mic, but I want to construct an object that takes the sound of a person’s voice and outputs the coral reef translation to an underwater speaker. In a real setup, the speaker would be installed in a reef and linked to the device by a fiber optic cable and a satellite connection.

Aural Reef is a project by Zoe Bachman, designed and programmed by Zoe with Aarón Montoya as co-programmer.

Classes

Temporary Expert: Design + Science in the Anthropocene