Ross Goodwin

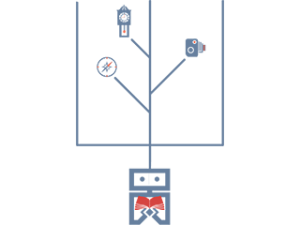

A camera, a compass, and a clock that use LSTM recurrent neural networks to generate stories based on images, location, and time, respectively

https://medium.com/@rossgoodwin/adventures-in-narrated-reality-6516ff395ba3

Description

Narrated Reality is my ITP thesis, a collection of machine intelligence "narrators" that produce poetry and prose based on their environments. The three devices I am creating are a camera, a compass, and a clock that generate text based on images, location, and time, respectively. In each case, the text will be printed on large-format thermal printers as the devices narrate their environments in real time.

To power the devices' generative capacity, I am training a number of LSTM recurrent neural network models on NYU's supercomputer cluster. To complete the physical devices, I will also need staging space.

I have long been fascinated by the possibilities of machines that can learn, and even more by the prospect of tools that could augment our creative capacity. I imagine a future where I can produce a cohesive textual story by compiling a series of related photographs or by taking a long walk in my city, and I want to see those possibilities realized. Moreover, as a photographer and a writer, I want to combine two of my favorite mediums in a way that redefines each while creating an experience that is entirely new.

For XStory: I will be screening a film I made with Oscar Sharp, based on a screenplay generated with the same LSTM technology that powers the three devices: https://vimeo.com/165547246 (password: sunspring)

Classes

Thesis