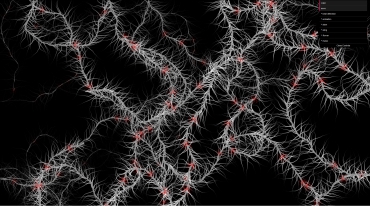

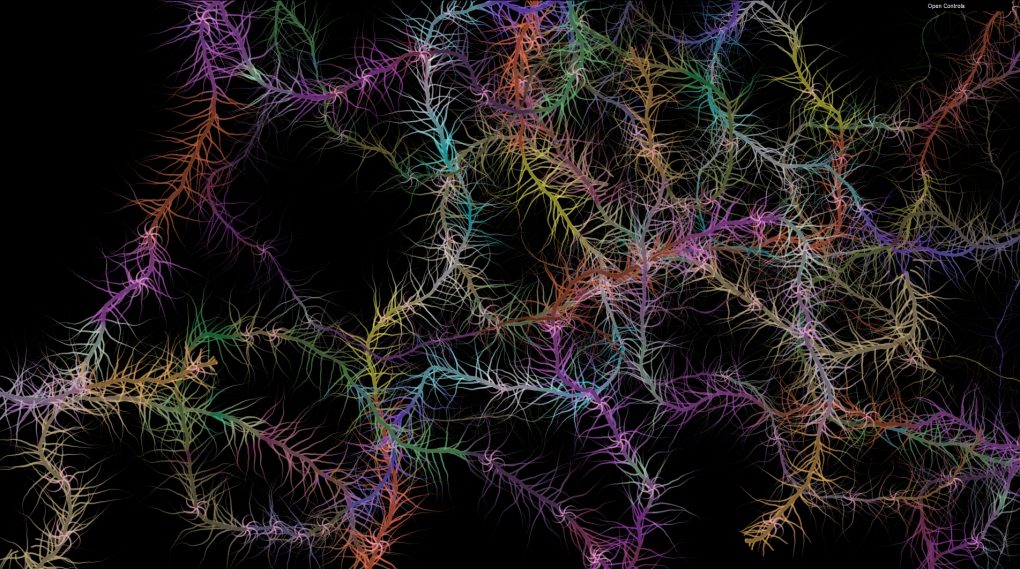

Calming constellation visuals with music using p5.js.

Hsiao Jui Lin

Description

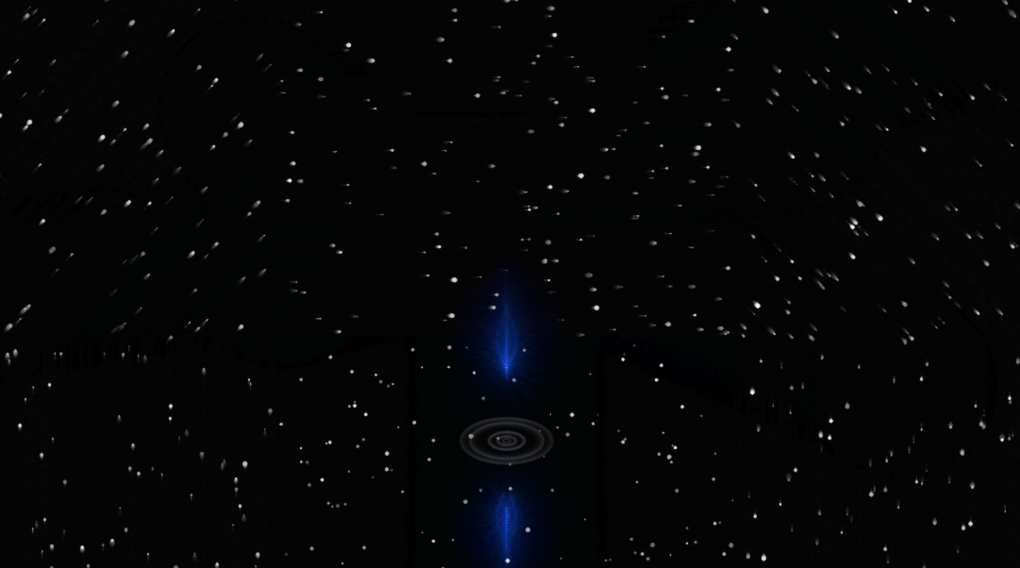

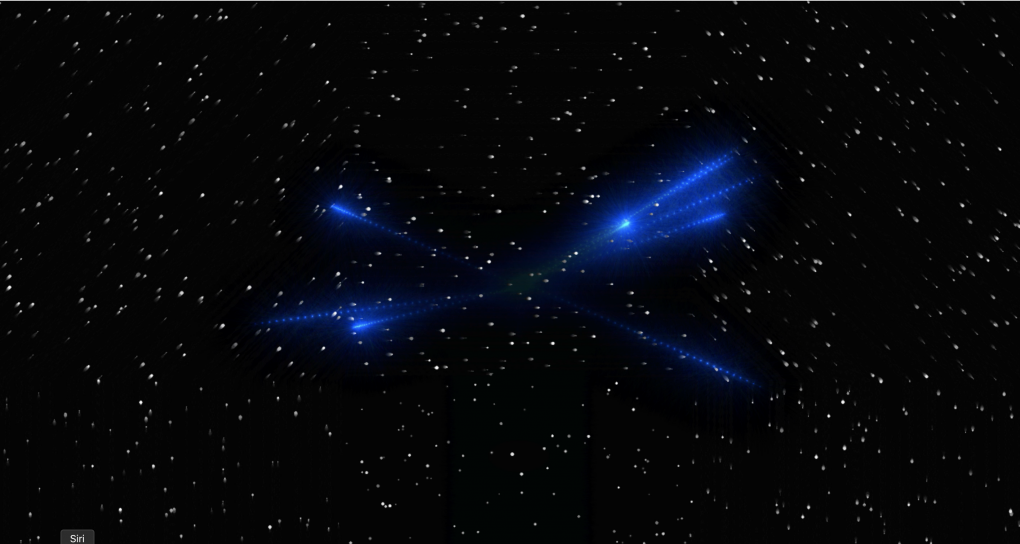

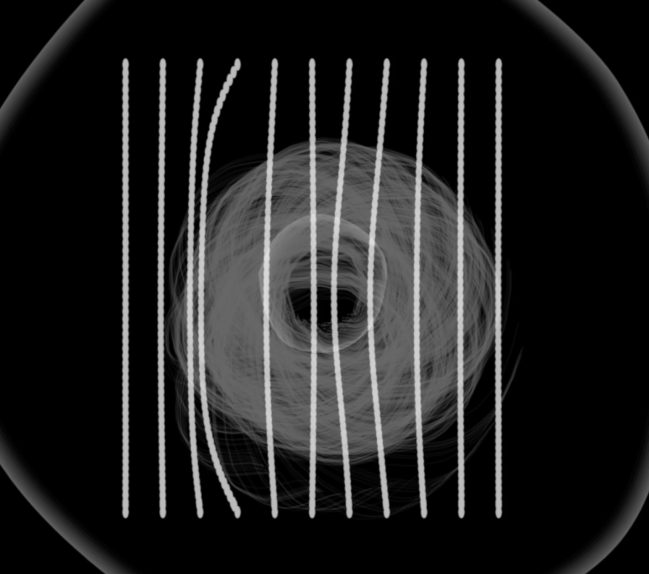

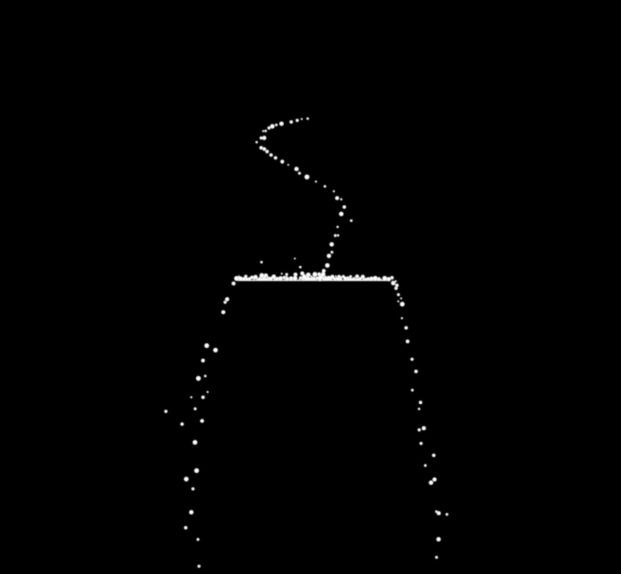

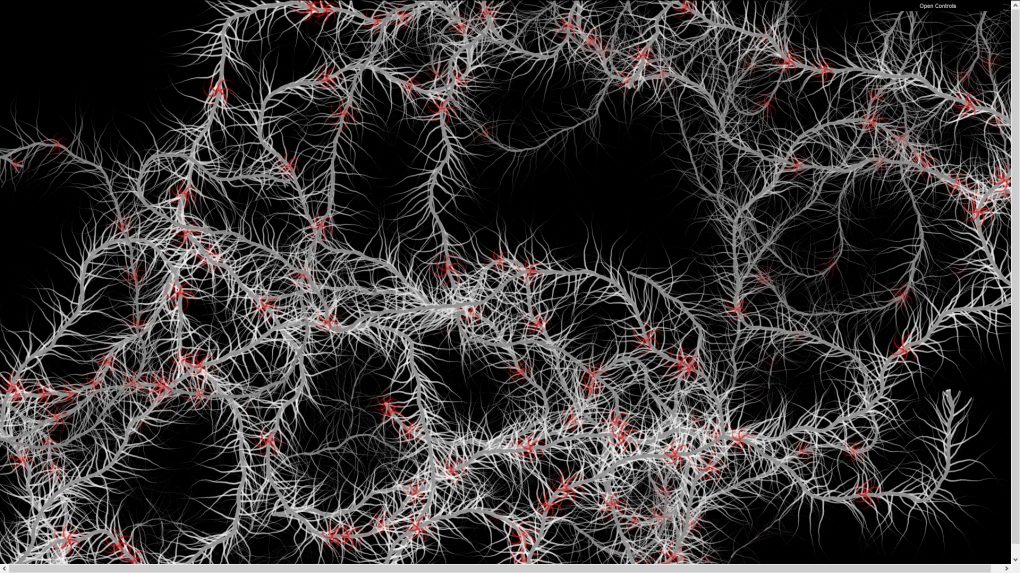

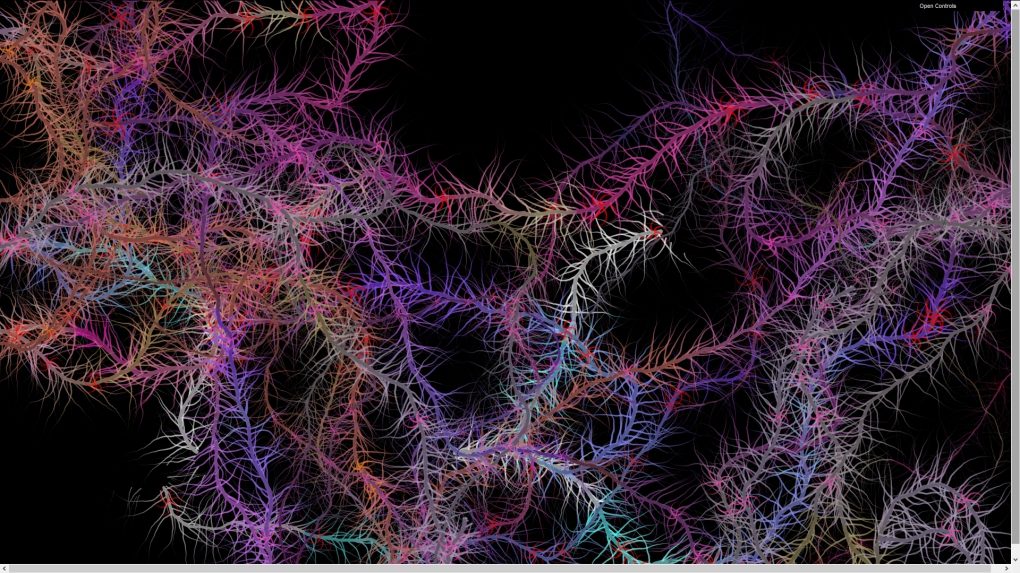

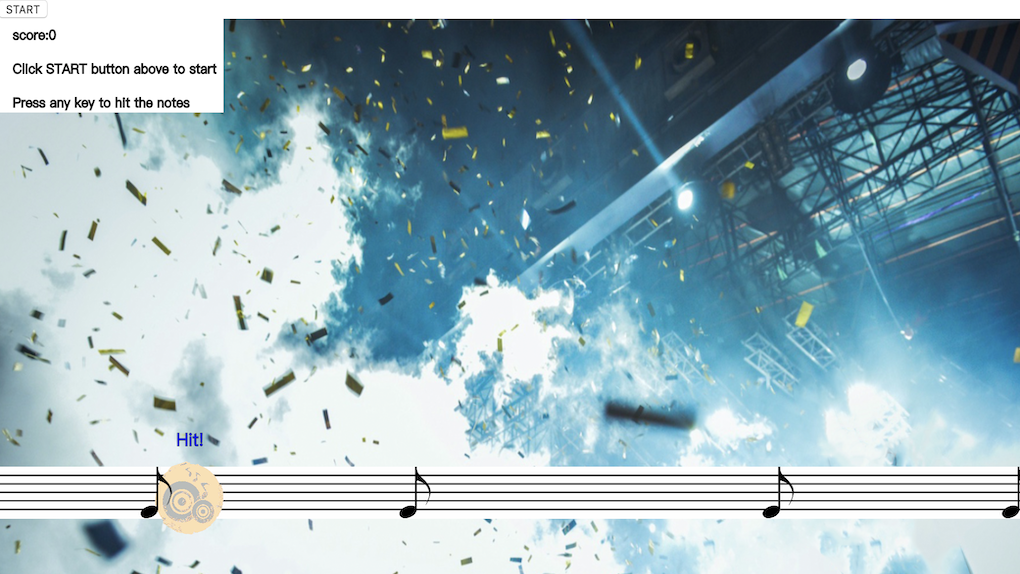

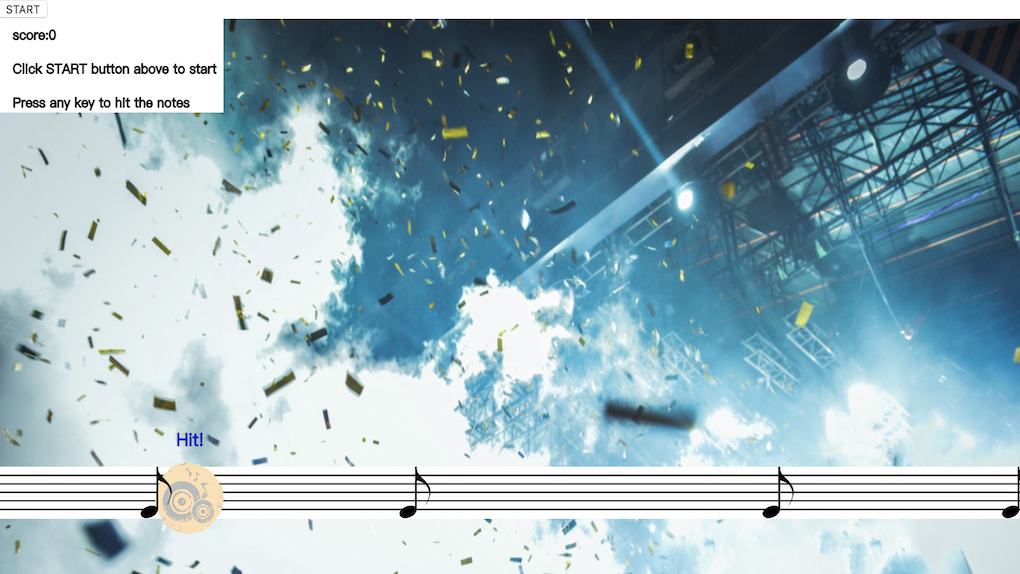

This project is an animated visualization of constellations. Its goal is to show what the constellation Leo looks like while creating a relaxing, calming atmosphere for users. The project is not yet complete: the finished version would ideally include all 12 zodiac constellations, a separate song for each constellation, and more animation for the stars. The animation is itself a loop, which is meant to convey the philosophical idea that the entire universe is an unending cycle. On the technical side, the project draws on the key concepts of Nature of Code, including objects, forces, oscillation, and autonomous agents.
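To illustrate how objects, oscillation, and a looping animation can fit together in p5.js, here is a minimal sketch. It is not the project's actual code: the `Star` class, the `LOOP_FRAMES` constant, and the star positions are all hypothetical. Each star's brightness follows a sine wave that completes a whole number of cycles per loop, so the last frame lines up with the first.

```javascript
// Illustrative values only; the real project's loop length and layout are not known.
const LOOP_FRAMES = 600; // length of one full animation cycle, in frames

class Star {
  constructor(x, y, cyclesPerLoop, phase) {
    this.x = x;
    this.y = y;
    // A whole number of twinkles per loop keeps the animation seamless.
    this.cyclesPerLoop = cyclesPerLoop;
    this.phase = phase; // offset so the stars do not pulse in unison
  }

  // Brightness in [0, 1], computed as simple harmonic motion.
  brightness(frame) {
    const angle =
      (2 * Math.PI * this.cyclesPerLoop * frame) / LOOP_FRAMES + this.phase;
    return 0.5 + 0.5 * Math.sin(angle);
  }
}

// Placeholder positions, not the real shape of Leo.
const stars = [
  new Star(120, 80, 3, 0),
  new Star(200, 140, 5, Math.PI / 2),
  new Star(260, 90, 2, Math.PI),
];

// p5.js entry points; p5 calls these when the sketch runs in a browser.
function setup() {
  createCanvas(400, 300);
  noStroke();
}

function draw() {
  background(5, 5, 30); // dark night sky
  const frame = frameCount % LOOP_FRAMES; // wrap around to restart the cycle
  for (const s of stars) {
    fill(255, 255, 255, 255 * s.brightness(frame)); // alpha follows the oscillation
    circle(s.x, s.y, 6);
  }
}
```

Because every star uses an integer `cyclesPerLoop`, `brightness(0)` and `brightness(LOOP_FRAMES)` agree up to floating-point rounding, which is what makes the wrap-around invisible.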