Dhruv Damle, Jennifer Kagan, Viniyata Pany

An exploration of smell and sound, our project encourages students of Indian classical music to practice singing by filling the room with a delicious smell when they hit the correct notes.

Description

Most of us are accustomed to navigating the world using our eyeballs. If we think of the sense of sight as a muscle, we get regular, rigorous visual exercise as we stare into screens, navigate public spaces, and snap photos with our smartphones.

But what about our other senses?

This project came out of a desire to exercise and explore two of those underappreciated, underutilized senses: smell and hearing.

As ITP alum Alex Kauffman wrote, “Smell is subjective, it’s ephemeral, and it’s not binary.” Interactions that involve smell are qualitatively different from interactions that involve our eyes.

Since much has been made of the relationship between smell and memory, as well as the relationship between smell and pleasure, we use scent as a positive feedback mechanism to encourage vocal students to practice singing.

Here's how it works:

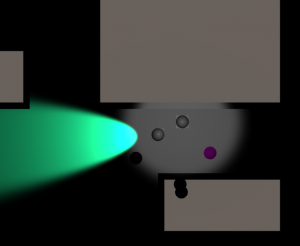

1. Select the note you want to practice by touching one of the circles. You'll hear a recording of the selected note and a recording of the tanpura for you to sing along to.

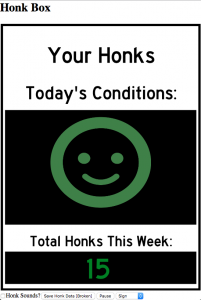

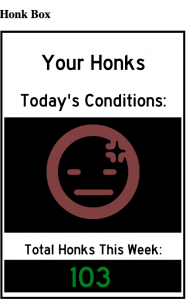

2. Sing! As you sing into the microphone, the device determines when your voice is within the frequency range for the note you selected. When you're within range, the device dispenses a delicious smell.
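
The "within range" check in step 2 can be sketched roughly as follows. This is a minimal illustration, not the device's actual code: the tonic frequency `SA_HZ`, the just-intonation ratios in `SWARA_RATIOS`, the `TOLERANCE_CENTS` window, and the function names are all assumptions made for the example, and the sketch assumes the sung pitch has already been estimated in hertz by some other step.

```python
import math

# Hypothetical values: the project description does not state the tonic,
# the tuning system, or how wide the accepted range is.
SA_HZ = 240.0             # assumed frequency of the tonic (Sa)
TOLERANCE_CENTS = 30.0    # assumed half-width of the accepted range

# Commonly cited just-intonation ratios for the seven natural notes,
# relative to Sa. Assumed here for illustration only.
SWARA_RATIOS = {
    "Sa": 1.0,
    "Re": 9 / 8,
    "Ga": 5 / 4,
    "Ma": 4 / 3,
    "Pa": 3 / 2,
    "Dha": 5 / 3,
    "Ni": 15 / 8,
}


def cents_off(sung_hz, target_hz):
    """Signed distance from target to sung pitch, in cents (1200 per octave)."""
    return 1200.0 * math.log2(sung_hz / target_hz)


def in_range(sung_hz, swara, sa_hz=SA_HZ, tolerance=TOLERANCE_CENTS):
    """Return True when the sung pitch falls inside the window for the note."""
    if sung_hz <= 0:
        # No usable pitch estimate (silence or noise).
        return False
    target_hz = sa_hz * SWARA_RATIOS[swara]
    return abs(cents_off(sung_hz, target_hz)) <= tolerance


if __name__ == "__main__":
    # With Sa at 240 Hz, Pa sits at 360 Hz under the assumed ratios.
    print(in_range(362.0, "Pa"))  # slightly sharp
    print(in_range(380.0, "Pa"))  # far too sharp
```

Measuring the distance in cents rather than hertz keeps the window equally forgiving for low and high notes, which is one plausible reason to define the range this way. A real build would call something like `in_range` on each new pitch estimate and switch the scent dispenser on while it returns true.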

Classes

Introduction to Physical Computing